Decapitated Robot HEAD i.e. “dread”

November 2015

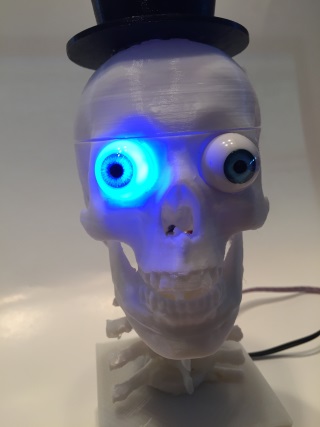

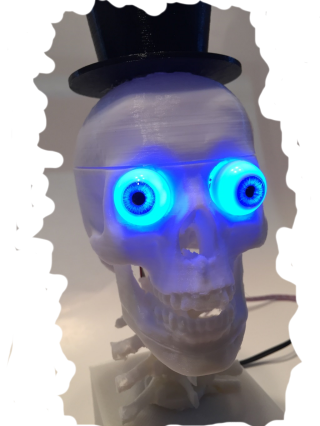

The basic goal of DRHEAD is to have fun with a decapitated head... wait, that really doesn’t sound right, does it? Anyhow, that is what he is: something silly and simple at first, but with options to grow. DRHEAD has a little bit of entertainment built in from his looks alone. Pretty handsome feller, no? He is really just a clone of my old Box Head robot and uses the same basic code, but he still provides some fun and entertainment.

The Story

I had printed this skull and top hat before Halloween back in 2014 but never did anything with it. By the time the eyeballs I bought for it arrived from across the big pond, I had lost interest and nothing had developed. With Halloween 2015 approaching, I thought he needed to be brought to life.

He uses the Box Head core code for voice recognition but I really didn’t like any of the Microsoft SAPI5 voices (yes the master PC is an old XP laptop) for text to speech for him. I didn’t want to reuse the Sam voice, as that is Box Head’s personality and the other old SAPI5 voices just didn’t fit. I finally found the eSpeak app (http://espeak.sourceforge.net/) and by changing the pitch a bit came up with something that seems rather “DRHEADish” to me. It’s a little slow responding as I am firing off the command line version outside of the VB.net app to talk, but it works.

I needed a stand for him and ran across another skull and spine model. I liked the skull I already had better, and after printing out the spine it seems to work fine with it. The other skull would actually be easier to animate, however, if anyone wants to give it a try: http://www.thingiverse.com/thing:194928

Personality

Basically he is just your run-of-the-mill “blue eyed skull with a top hat”, but the bulging eyes and blinking blue, along with the polite but different English voice, help to make him fun. Pretty normal actually. I originally wanted pan AND tilt for his neck, but after looking at the printed parts it seemed overly difficult, and he really doesn’t have the ability to follow a face or anything, so I settled on pan only… or “neck rotation” to be more decapitated head specific.

I recently read an excellent article on making your robot more human, posted on RobotRebels.org by Killer Angel, and thought I’d take a stab at it using RGB LEDs for a blinking simulation. Trust me, you will NOT find out about “human blinking traits using an Arduino and LED” by searching for “Arduino blinking LED”... you know why. 🙂 So after reading the blinking information in that article, I threw together some basic code to allow blinking, and it seems to work OK. It isn’t using any of the article’s specific tips yet, such as blinking before a move, but it should be able to eventually. The LEDs are NOT hooked to PWM pins, so they are really just on or off, but it seems to work.
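The blink timing can be sketched roughly as below. This is Python rather than the Arduino code, purely to show the logic. The timing ranges and the names `blink_schedule` and `leds_on` are assumptions for illustration, not values or functions taken from DRHEAD’s sketch or from the article.

```python
import random

# Assumed timing ranges (not from DRHEAD's code): the eyes stay lit for a few
# seconds, then go dark briefly to simulate a blink.
OPEN_RANGE_MS = (2000, 8000)
BLINK_RANGE_MS = (100, 300)


def blink_schedule(total_ms, rng):
    """Return a list of (start_ms, end_ms) windows during which the LEDs are off."""
    blinks = []
    now = 0
    while True:
        now += rng.randint(*OPEN_RANGE_MS)      # eyes open (LEDs on)
        length = rng.randint(*BLINK_RANGE_MS)   # eyes closed (LEDs off)
        if now + length > total_ms:
            break
        blinks.append((now, now + length))
        now += length
    return blinks


def leds_on(t_ms, blinks):
    """The LEDs have no PWM, so a blink is a hard off window, not a fade."""
    return not any(start <= t_ms < end for start, end in blinks)


if __name__ == "__main__":
    schedule = blink_schedule(30_000, random.Random(42))
    for start, end in schedule:
        print(f"blink from {start} ms to {end} ms")
```

Randomizing both the gap and the blink length keeps the eyes from looking like a metronome. A “blink before move” rule could later be added by inserting an extra off window just before a neck command.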

Configuration and Functions

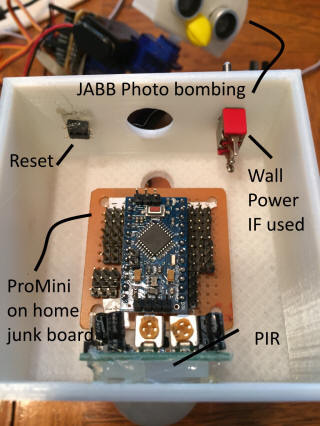

Again, like Box Head, DRHEAD has at least two pieces to the puzzle going on. The PC is listening for voice commands and acting on them by responding to preset phrases, getting data from the web and speaking it, and/or sending commands via serial to the Arduino that is doing the animation. The Arduino does send back results from the commands, but the PC really isn’t doing anything with them at this time. Maybe later.

Using the eSpeak text to speech does work but, as mentioned, there is a bit more latency between the command to speak and the actual sounds. Since I haven’t set anything up to use the sound output to animate speech, that created a bit of a challenge for getting some type of jaw animation working. For now the Arduino pauses a bit before either opening his jaw during speech or randomly animating his jaw if you tell him to “Please animate your jaw” to set that mode. It’s really not even close to lip sync, but hey, he’s a skull for goodness sake, how much can he really pronounce? One cool thing about eSpeak is that the command line can easily change the pitch and speed of the voice, as well as the base language of the speaking voice. I’ve added pitch and speed commands to move things up/down or faster/slower just for fun.
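As a rough illustration of driving the eSpeak command line, here is a Python sketch (the real app is VB.net, and its code is not shown here). The `-v`, `-p` and `-s` options are eSpeak’s voice, pitch and speed flags. The helper names, the default values and the clamping limits are assumptions for illustration.

```python
import subprocess

PITCH_MIN, PITCH_MAX = 0, 99      # eSpeak -p range
SPEED_MIN, SPEED_MAX = 80, 450    # assumed usable -s range, words per minute


def clamp(value, low, high):
    """Keep value inside [low, high]."""
    return max(low, min(high, value))


def adjust(current, step, low, high):
    """Apply a 'pitch up/down' or 'faster/slower' step without leaving the range."""
    return clamp(current + step, low, high)


def espeak_args(text, pitch=50, speed=160, voice="en"):
    """Build the argument list for one eSpeak call."""
    return [
        "espeak",
        "-v", voice,
        "-p", str(clamp(pitch, PITCH_MIN, PITCH_MAX)),
        "-s", str(clamp(speed, SPEED_MIN, SPEED_MAX)),
        text,
    ]


def speak(text, **settings):
    """Run eSpeak and wait for it to finish; requires espeak on the PATH."""
    subprocess.run(espeak_args(text, **settings), check=True)


if __name__ == "__main__":
    pitch = adjust(50, -20, PITCH_MIN, PITCH_MAX)   # "pitch down"
    print(espeak_args("Good evening", pitch=pitch))
```

Starting a new process for every phrase is simple, but it is also a likely source of the extra latency, since the program has to start up each time before any sound comes out.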

The jaw animation is pretty limited, but just getting the jaw to move was more work than expected. When I put the jaw on in 2014 I intentionally ran some big paper clip wire up through the jaw into the skull to allow moving it. However, after getting the neck rotation servo inside the skull it was impossible to get another 9g servo in there. I ended up using a 2.5g servo and found out they are pretty fragile after messing one up trying to get it positioned and “plastic welded”... OK, whatever, hot glued into place.

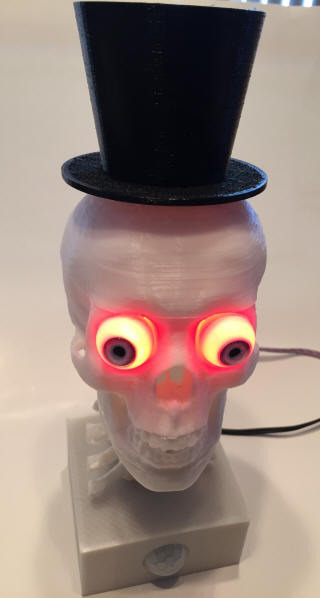

The backs of the bulging eyes were cut out… hmm, again that just sounds bad... but anyhow, they were cut out until the RGB LEDs would fit into the eyes. I like blue, so his default eye color is blue, although you can change it to red by telling him “Please be angry”… you actually do have to use the “please” to make that work. He has green eyes as well, as shown in the video.

Basic Pin Layout

0 Serial to PC

1 Serial to PC

2 Leaving for maybe future serial to other device like BoxHead

3 Leaving for maybe future serial to other device like BoxHead

4 Neck Rotate Servo

5 Jaw Animation Servo

6

7 Left Eye Red LED

8 Left Eye Blue LED

9 Left Eye Green LED

10 Right Eye Red LED

11 Right Eye Blue LED

12 Right Eye Green LED

13 PIR Input – not used yet

14

A0 FUTURE LDR Input

A1

A2

A3

A4 I2C Left for Future

A5 I2C Left for Future

A6

A7
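The eye wiring in the layout above can be written down as a small lookup. The sketch is in Python only to show the mapping, and `eye_pin_states` is a hypothetical helper rather than anything from the Arduino code. Here `True` simply means “this color is lit”; whether that is a HIGH or LOW output depends on how the RGB LEDs are wired, which the layout doesn’t say.

```python
# Pin numbers taken from the pin layout above.
NECK_SERVO_PIN = 4
JAW_SERVO_PIN = 5
EYE_PINS = {
    "left": {"red": 7, "blue": 8, "green": 9},
    "right": {"red": 10, "blue": 11, "green": 12},
}
VALID_COLORS = ("red", "green", "blue", "off")


def eye_pin_states(color):
    """Return {pin: lit} for both eyes; the LEDs are plain on/off (no PWM)."""
    if color not in VALID_COLORS:
        raise ValueError(f"unknown eye color: {color}")
    states = {}
    for pins in EYE_PINS.values():
        for name, pin in pins.items():
            states[pin] = (name == color)
    return states


if __name__ == "__main__":
    print(eye_pin_states("blue"))   # default eye color
    print(eye_pin_states("red"))    # "Please be angry"
```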

So what does he really do?

Really, right now all he can do is sit around and listen and tell me the status of our work systems, the house systems, and the weather, read some headlines, tell me how many days until holidays such as his favorite, Halloween, and control some things around the house like Box Head does.
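The holiday countdown is simple date arithmetic. Here is a minimal Python sketch of it (the real version lives in the VB.net app and isn’t shown here); `days_until` is a hypothetical name.

```python
from datetime import date


def days_until(month, day, today):
    """Days from today to the next occurrence of month/day (0 if it is today)."""
    target = date(today.year, month, day)
    if target < today:
        # Already passed this year, so count to next year's date instead.
        target = date(today.year + 1, month, day)
    return (target - today).days


if __name__ == "__main__":
    print(days_until(10, 31, date.today()), "days until Halloween")
```

For example, `days_until(10, 31, date(2015, 10, 1))` gives 30. A holiday on February 29 would need special handling, which this sketch ignores.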

He has a couple of somewhat snappy comebacks to specific questions, or if you ask him to do the same thing twice, but otherwise he just hangs around and waits for a command. I’ve added some randomness so he’s not always saying “OK”. It’s pretty simple stuff to just add an interjection function that returns different possibilities such as OK, Sure, Sounds Good, etc. That was actually addressed in the human type robot article as well. There are other places, such as when he is saying “Let me check”, that could have more options such as “I’m not sure”, “Well it appears that”, etc.

Next Steps

Dictation Type of Listen Option

I really want to figure out how to jump back and forth in the SAPI component between command and dictation so he (and Box Head) can ask you your name or a specific question and can then parse or speak what he heard. I’m surprised I can’t find any examples that work, and apparently I’m not a good enough coder to figure it out myself yet. I know I can never get freeform voice commands to work, but mixing in specific inputs when prompted could be helpful for interaction.

Random Responses and Head Movement

I also want to add some more randomness to the different parts of the responses to make him feel more natural and have settled on feeding my RandomInterjection() function with types such as PREFIX, POSITIVE, NEGATIVE, WAIT, etc. This makes it easy to add new interjections by just adding them to a comma delimited string.
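The typed interjection idea can be sketched like this in Python (the real `RandomInterjection()` is in the VB.net app and isn’t shown). The POSITIVE and WAIT phrases come from the text above. The NEGATIVE and PREFIX phrases, and the optional `last` argument for avoiding an immediate repeat, are assumptions added for illustration.

```python
import random

# One comma-delimited string per type, so adding a phrase is a one-line edit.
INTERJECTIONS = {
    "POSITIVE": "OK,Sure,Sounds Good",
    "WAIT": "Let me check,I'm not sure,Well it appears that",
    "NEGATIVE": "Sorry,No can do",   # placeholder phrases
    "PREFIX": "Well,Hmm",            # placeholder phrases
}


def random_interjection(kind, rng=random, last=None):
    """Pick a random phrase of the given type, skipping `last` when possible."""
    options = [p.strip() for p in INTERJECTIONS[kind].split(",") if p.strip()]
    if last in options and len(options) > 1:
        options.remove(last)
    return rng.choice(options)


if __name__ == "__main__":
    previous = None
    for _ in range(5):
        previous = random_interjection("POSITIVE", last=previous)
        print(previous)
```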

I have code in the Arduino that is supposed to move his head around randomly or wander about a little but it’s not working so I need to work on that as well. When it was working it was too jerky so in my attempts to make it better I apparently broke it. That is on the top of the list to resolve and update.
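One common way to tame jerky servo wandering is to pick a random target angle and then creep toward it a degree or two per update, instead of jumping straight there. The Python sketch below only shows that logic. It is not the broken Arduino code, and the angle limits and step size are assumed values.

```python
import random

PAN_MIN, PAN_MAX = 30, 150   # assumed safe neck rotation range, in degrees
MAX_STEP = 2                 # assumed degrees moved per update tick


def step_toward(current, target, max_step=MAX_STEP):
    """Move at most max_step degrees from current toward target."""
    delta = target - current
    if abs(delta) <= max_step:
        return target
    return current + max_step if delta > 0 else current - max_step


def wander_path(start, rng, targets=3):
    """Yield successive servo angles that visit random targets in small steps."""
    angle = start
    for _ in range(targets):
        target = rng.randint(PAN_MIN, PAN_MAX)
        while angle != target:
            angle = step_toward(angle, target)
            yield angle


if __name__ == "__main__":
    for angle in wander_path(90, random.Random(7), targets=2):
        print(angle)
```

On the Arduino, each yielded angle would be one servo write followed by a short delay. A smaller step or a longer delay gives a slower, smoother turn.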

More Automated Home Interaction

There are many, many things I can add to both DRHEAD and Box Head to integrate with our automated home. Since I developed the front end to the house as a web app, I can easily add to the Box Head control page to control and report on things for current and future “HeadBots” as desired. For security reasons I do NOT allow them to do certain things like open the garage door or disarm the alarm, but they can turn lights on and off, close the garage door, clear the dryer notice, arm the alarm, tell the house goodnight and perform various other functions.

Funny thing is, I always wanted to add voice controls to the house but never could figure out how to get it working on the HA PC across more areas. With DRHEAD and Box Head that is actually pretty easy.

More Sensors

I also need to activate the PIR in his base so he can feed some environmental data back to the PC code so it knows how to respond better. Just knowing that something is close to him can allow him to wake himself up or make snide comments like Box Head can, saying “You are getting into my personal space” when asked.

He could use an LDR or light sensor so he knows if it’s dark or not and can be quiet when it’s dark by going to sleep or something similar.

I even thought of adding a cheap compass so he can know which direction he is facing. There are a few pins left and the I2C pins are open, so there are lots of options there.

Arduino Triggered Events

He and Box Head also need the ability to trigger the PC from the Arduino side. Right now the PC controls the Arduino and polls for a response after a command. I need to add a timer to go check for commands from the Arduino even if nothing is going on in the PC side. That will allow some environmental triggered activities.
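The timer-driven check could look something like this Python sketch. Everything here is hypothetical: the `NAME:value` message format, the `poll_arduino` helper and the event names are invented for illustration, and `read_line` stands in for a non-blocking serial read that returns `None` when nothing is waiting.

```python
def poll_arduino(read_line, handlers):
    """Drain pending lines from the Arduino and dispatch each one to a handler.

    read_line: callable returning the next pending line, or None when empty.
    handlers:  dict mapping an event name to a function taking the value text.
    Returns the lines that had no matching handler.
    """
    unhandled = []
    while True:
        line = read_line()
        if line is None:
            break
        name, _, value = line.strip().partition(":")
        handler = handlers.get(name)
        if handler:
            handler(value)
        else:
            unhandled.append(line)
    return unhandled


if __name__ == "__main__":
    pending = ["PIR:1", "LDR:512"]   # fake unsolicited messages

    def fake_read():
        return pending.pop(0) if pending else None

    poll_arduino(fake_read, {
        "PIR": lambda v: print("motion detected:", v),
        "LDR": lambda v: print("light level:", v),
    })
```

The PC app would call this from a timer a few times per second, whether or not a voice command is in progress.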

Closing

If nothing else at least I used the 3D skull and validated I can move the Box Head concept to another PC and animated head thingy dealy. He’s similar but quite different from Box Head which is good. He’ll likely end up in another room somewhere away from Box Head to expand the voice control options of the automated home and just to provide some entertainment. I’m actually printing out a scaled down woman’s face that may be more fitting for the Anna voice that is in Win 7/8/etc and will likely copy the code over to use more specifically for work junk for the fun of it.